Nvidia is preparing to launch a new chip designed to speed up AI responses, breaking with its longstanding approach of pitching the same processor for many tasks.

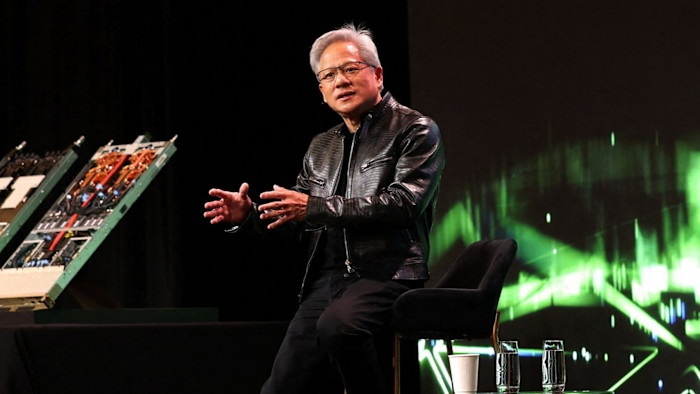

Chief executive Jensen Huang is expected to unveil a chip focused on “inference”, the running rather than training of models, according to people familiar with the company’s plans for its GTC developer conference next week.

It will be the first new product emerging from December’s $20bn deal to hire the founders of Groq, a start-up developing “language processing units” tuned for high-speed responses to complex AI queries.

Three months after the deal, Nvidia is expected to debut a Groq-based LPU to work alongside its forthcoming flagship Vera Rubin graphics processing unit, as part of a family of products aimed at heading off challengers and addressing new kinds of AI applications.

The move comes as the world’s most valuable company faces challenges from start-ups and customers such as Google developing their own AI chips. This week, Meta announced a new family of four inference-focused processors.

“We are entering an interesting phase that is not ‘Nvidia dominant’,” said one Silicon Valley venture investor.

Over the past three years, Nvidia’s $4.5tn market capitalisation has been built on its GPUs becoming the backbone of the generative AI industry, training models such as those behind OpenAI’s ChatGPT.

Huang has argued that a single system can be used for both training new AI models and then running the chatbots and coding tools built on them. Big Tech groups have already spent hundreds of billions deploying these systems, even as they invest in developing their own specialised AI chips.

However, the growing sophistication of AI tools such as “agentic” coding systems is forcing Huang to abandon his mantra that one GPU can handle any workload.

The Groq deal was worth about $20bn, according to people familiar with the transaction, making it one of the biggest deals in Nvidia’s 33-year history.

It includes a licensing tie-up and the hiring of key talent, including Groq founder and former Google chip executive Jonathan Ross. Groq, which was working with Samsung to manufacture its products, had previously advertised its LPUs as both faster and more efficient than Nvidia’s GPUs for inference.

Nvidia’s flagship Blackwell and Rubin systems rely on high-bandwidth memory to handle the massive data loads used by AI models.

But HBM is expensive and in increasingly short supply, as memory suppliers such as SK Hynix and Micron struggle to keep up with AI demand.

The Groq-style chip will use SRam, or static random access memory, instead of the dynamic Ram used for HBM, according to people familiar with Nvidia’s plans. SRam is more readily available and better suited to speeding up AI “reasoning” tasks.

Bank of America analysts estimate that the AI data centre market will reach about $1.2tn by 2030, with inference accounting for 75 per cent of spending, compared with about 50 per cent last year.

In a note this week they said Nvidia’s flagship event would likely unveil a “broadened AI portfolio” including an SRam-based chip from Groq. The Wall Street Journal previously reported elements of Nvidia’s plans.

There is another benefit in offering inference chips that can be more quickly and easily deployed, said June Paik, chief executive of FuriosaAI, an Nvidia competitor.

“Many enterprises want to do inference using their existing data centres, but the vast majority of today’s data centres . . . can’t support the latest liquid-cooled GPUs,” Paik said.

“The future of the data centre is not going to be a one-size-fits-all world,” said Ben Bajarin, tech analyst at Creative Strategies.

Nvidia declined to comment.

Additional reporting by Cristina Criddle in San Francisco